There was a point where it became obvious that flowenricher had outgrown its original shape.

It started as an engine that ingested NetFlow/IPFIX, enriched traffic with ASN, GeoIP, BGP path, PTR, and SNMP data, ran detections, and handled mitigation/blackhole logic. The backend side was already doing serious work. But the operator experience around it still belonged to an older world: external dashboards, separate views, extra moving parts, and too much friction between “data exists” and “this is actually easy to inspect in production.”

That was the real turning point.

The problem was never only about graphs. It was about workflow.

When you are operating something like this in practice, you do not just want a collector and a database. You want to answer questions quickly:

What is this IP?

What ASN is behind it?

What path is it taking?

Was it seen before?

Has it triggered detections?

Was it blackholed?

What does its recent history look like?

How does this ASN compare to previous snapshots?

What changed since yesterday?

We already had the engine. What we did not fully have was a native interface built around those questions.

That is where the transition from flowenricher to Argus really began.

Argus is not just a rename. It is a change in direction.

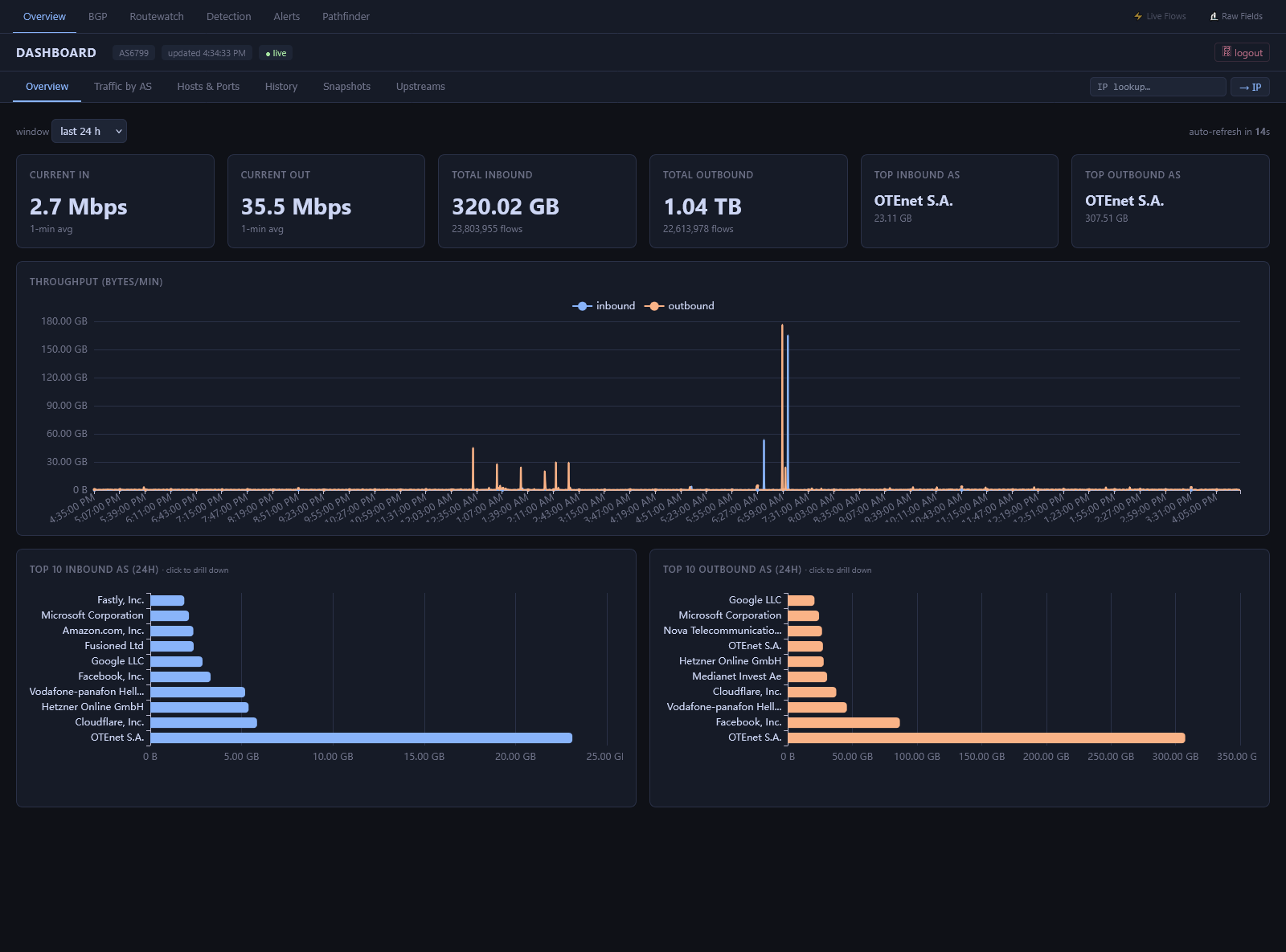

Instead of treating the UI as something external, or relying on the old pattern of “backend here, visualization elsewhere,” we moved toward a model where the platform owns the whole experience. The repo now describes Argus as a self-contained Go-based NetFlow/IPFIX enrichment, detection and mitigation engine with an embedded dashboard, SQLite-backed state, and no need for external databases for its core workflows.

That shift matters a lot more than it sounds.

It means the system is no longer just producing telemetry for something else to visualize. It is becoming its own product.

A big part of that evolution can actually be seen in the recent PR history.

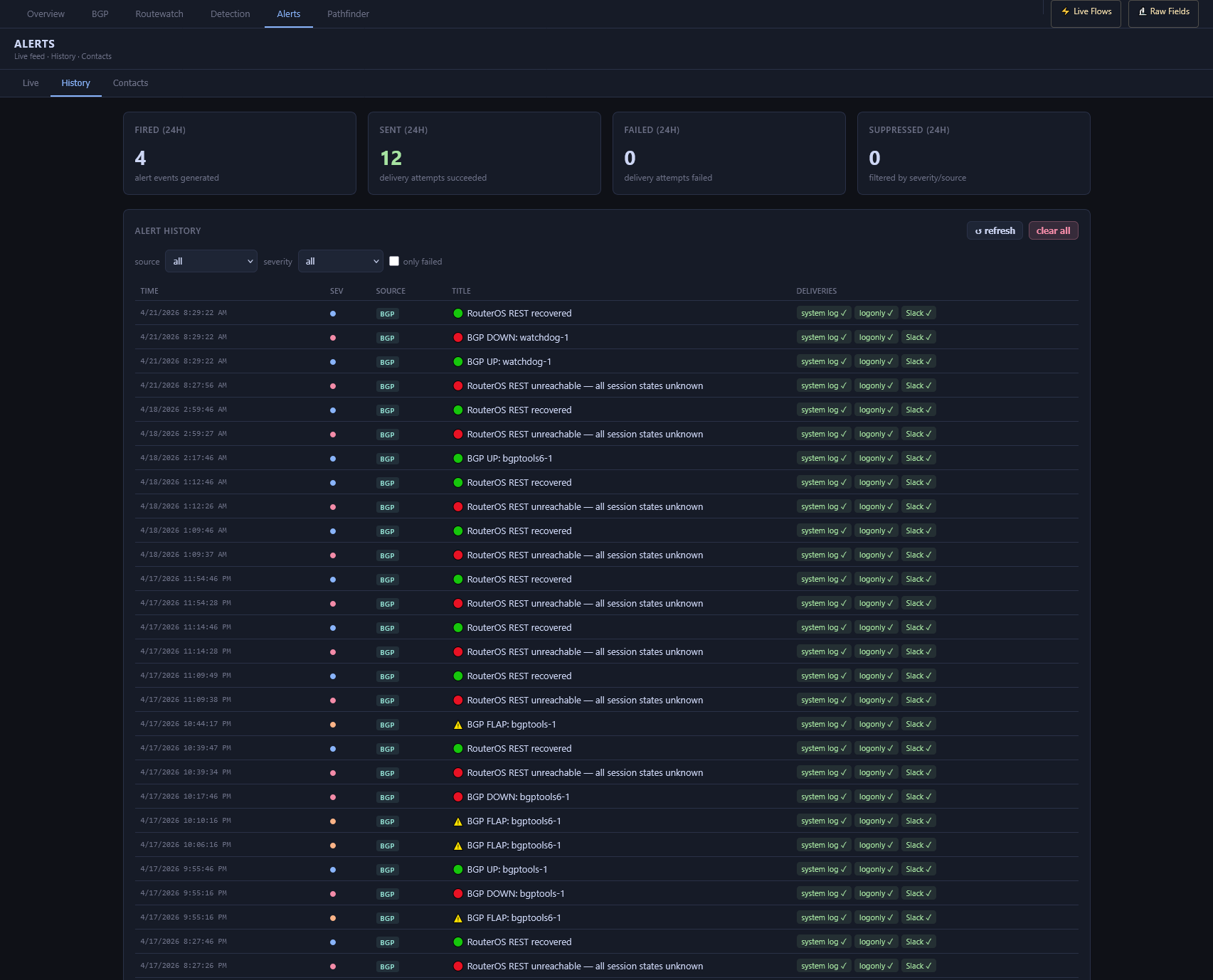

One of the first important changes was auth/session handling. Argus added support for goauth with an optional separate session_db_path, so session writes can live in their own SQLite file instead of competing with the rest of the platform's state for the same database file. That is a small detail on paper, but in a real system it is exactly the kind of thing that makes an embedded UI feel robust instead of fragile. The current startup path wires goauth directly in cmd/argus/main.go, and the repo documents the separate session DB option in its config as part of the platform design.

From there, the UI story accelerated.

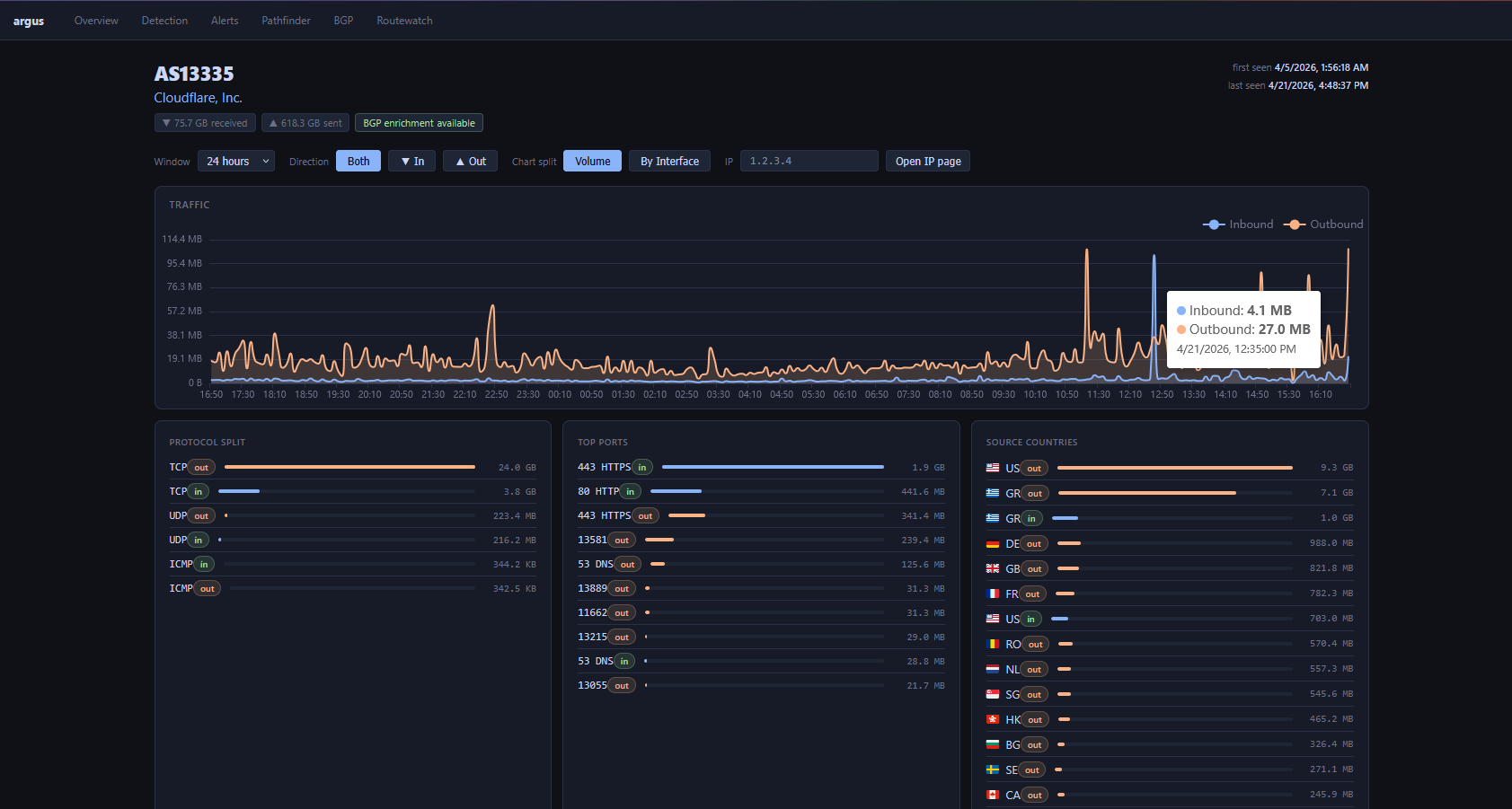

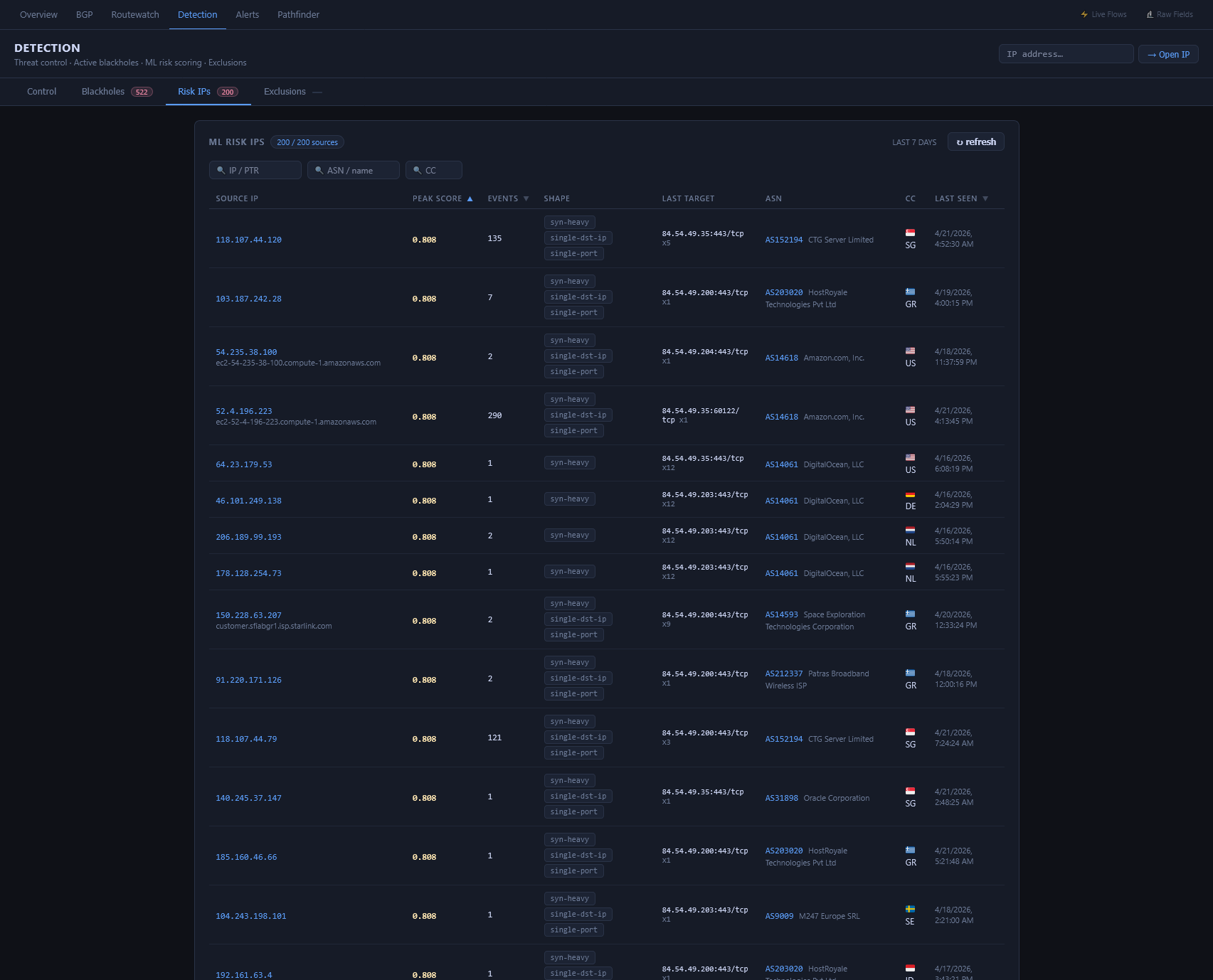

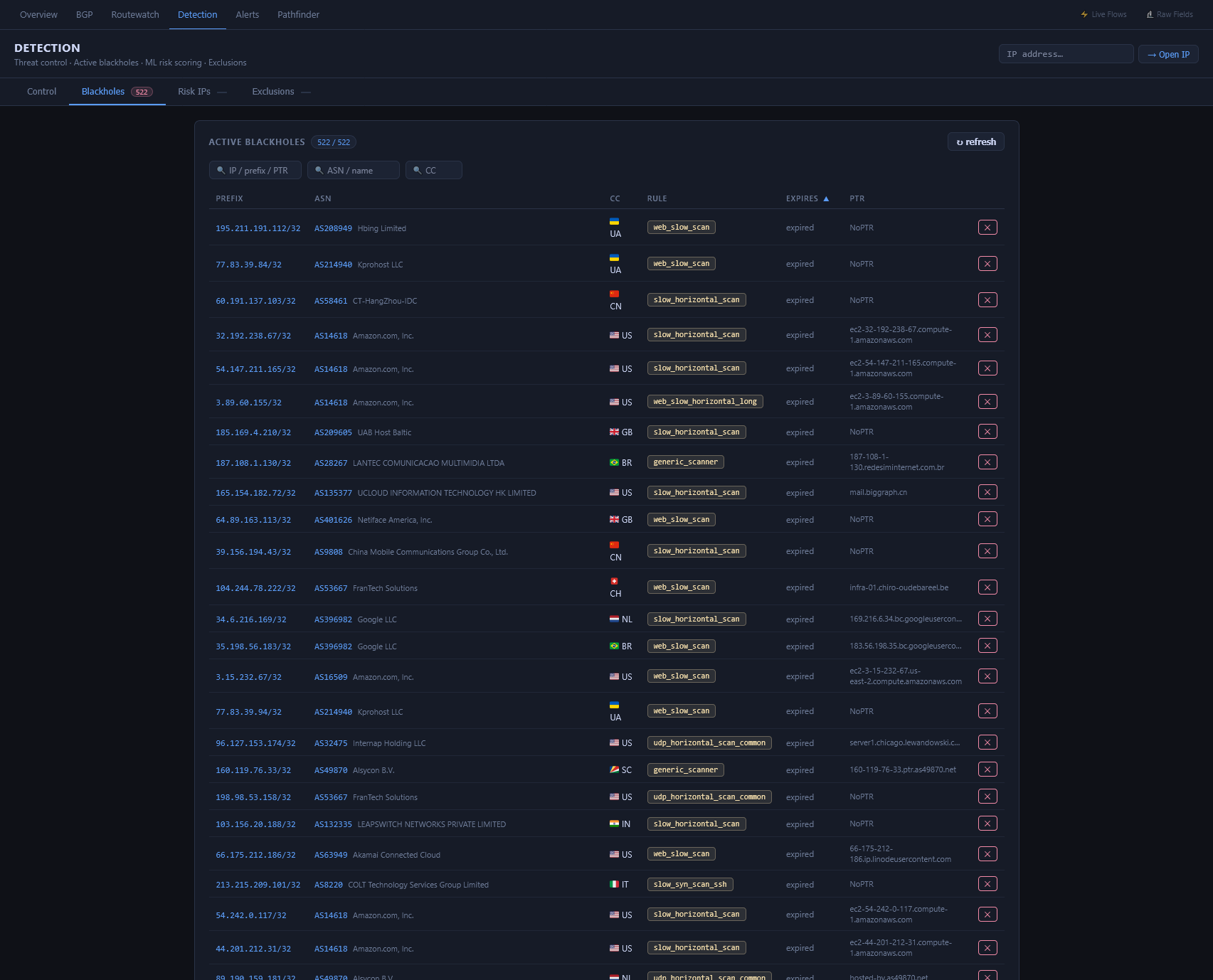

Recent work added a dedicated IP page, deep links across the UI, blackhole state visibility, detection history, and then a SQLite-backed IP profile cache with stale refresh and history enrichment. That is an important architectural step because it moves the platform away from “lookup everything every time” and toward a model where operational context is cached, retained, and immediately available. In other words, the interface starts feeling fast and stateful, not disposable. The changes around internal/api/server.go, internal/api/ip_profile_cache.go, and the embedded static pages make that shift visible in the repo.

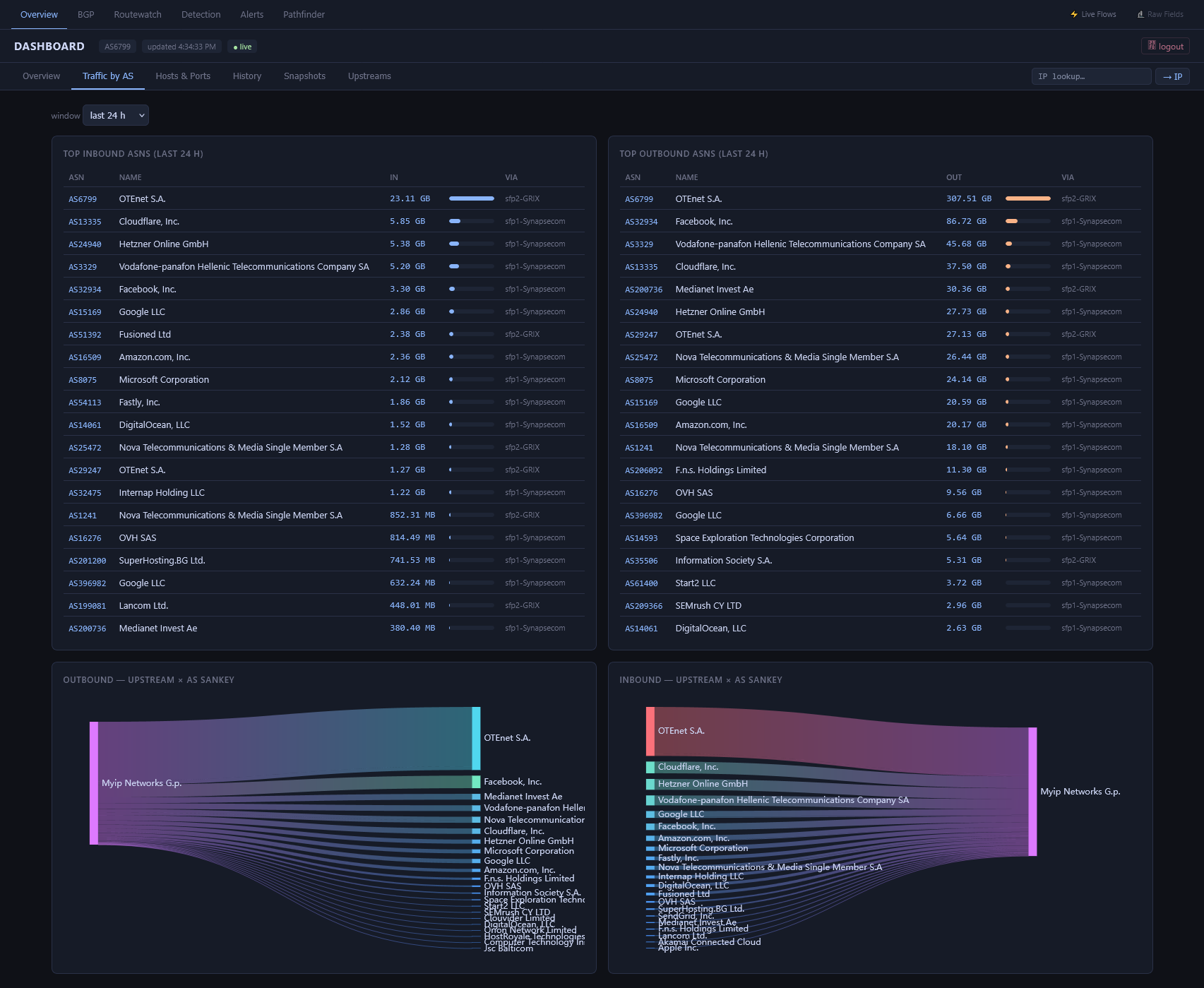

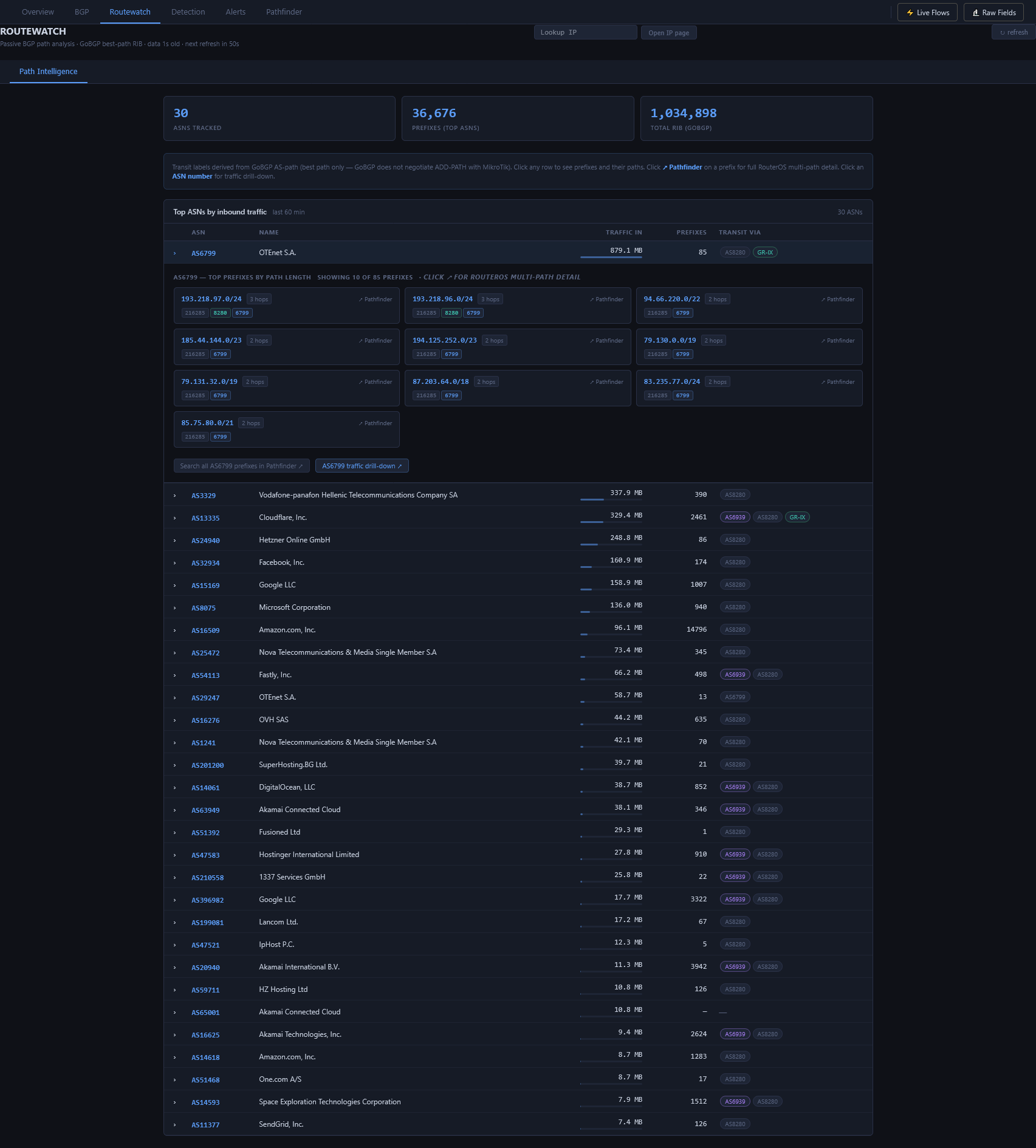

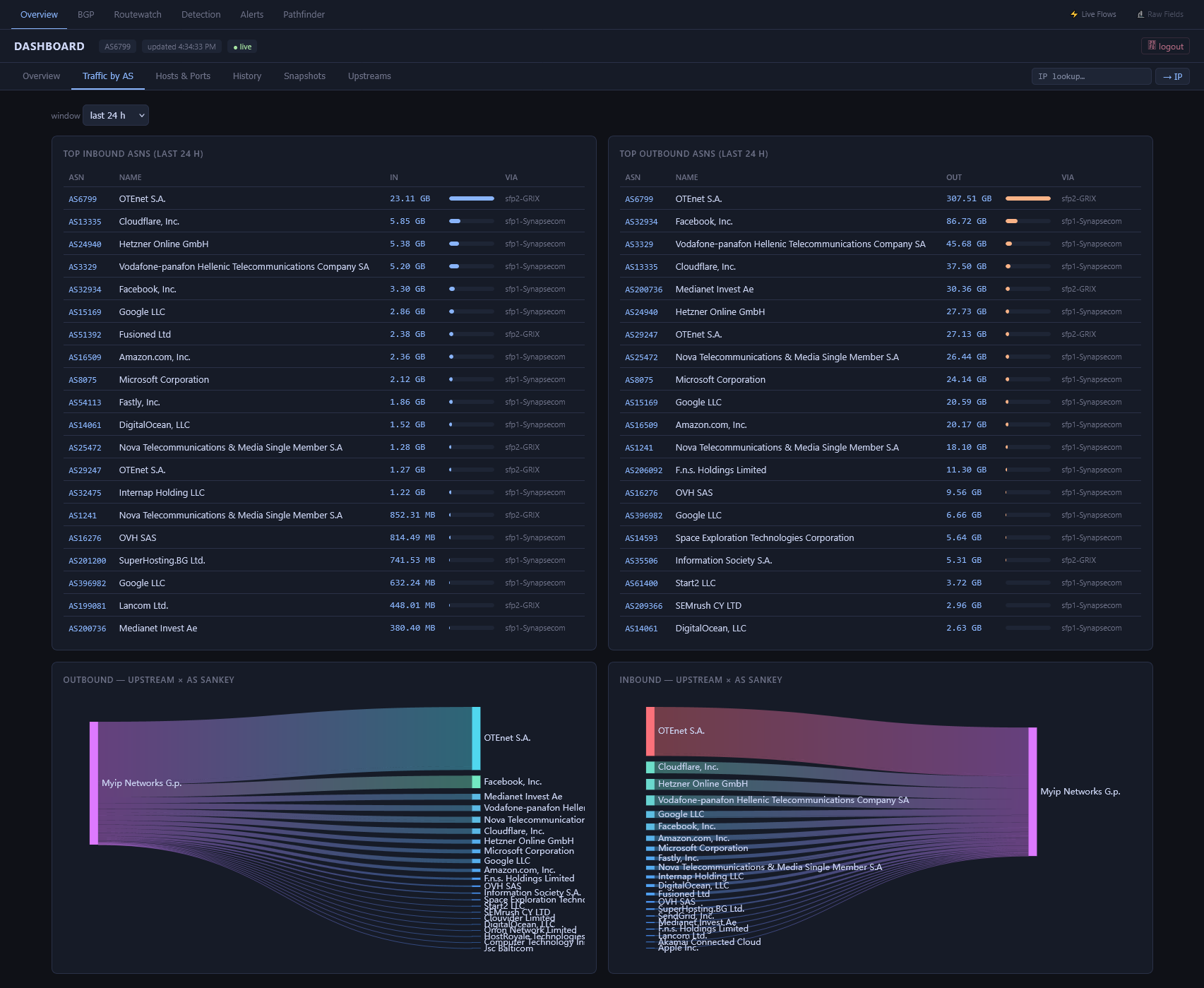

The same thing happened for ASN investigations.

Instead of a fragmented frontend calling multiple endpoints and stitching together partial data, Argus gained a unified ASN profile flow, then external ASN intelligence enrichment, and then a more resilient stale-while-revalidate model with per-provider status and async refresh behavior. That is a big maturity jump. The platform is no longer just showing raw internal data; it is combining local telemetry, BGP-derived visibility, cached context, and external intelligence into one operator-facing view. Again, that progression is visible in the recent PR sequence around the ASN profile handler and enrichment layer.

What I personally like most about this transition is that it reduces dependency without reducing capability.

The old “ClickHouse + Grafana + separate backend” style can absolutely work, and it often works well. But it also tends to spread the experience across multiple places. One system stores flows. Another system graphs them. Another place might hold auth. Another tool might give you investigation context. Another script might expose mitigation state.

Argus is pushing in the opposite direction.

The dashboard is embedded.

The auth is integrated.

The snapshots are stored locally.

The history is part of the same platform.

The drill-down pages are designed around the exact questions an operator asks during traffic analysis, detection review, or mitigation checks.

That makes the platform feel tighter, more opinionated, and honestly more useful.

It also changes the development mindset. Once the UI lives inside the same project as the engine, you stop thinking only in terms of collectors, enrichers, and APIs. You start thinking in full workflows:

How does an operator move from an overview table to an ASN?

How quickly can they pivot from an ASN to an IP?

How do they see routing context?

How do they distinguish local data from external intelligence?

How do they know whether data is fresh, stale, missing, or degraded?

How do snapshots and current live views complement each other?

Those questions shape the code differently.

So for me, the story of Argus is really this:

It began as a strong enrichment/detection backend.

It is evolving into a full network visibility and mitigation platform.

And that is the interesting part of the journey.

Not just that it has a nicer interface now.

Not just that it uses SQLite for snapshots/history/cache.

Not just that it has its own auth through goauth.

But that the whole project is moving from “engine with tooling around it” to “cohesive product with its own operational experience.”

That shift is where a lot of the value is.

And honestly, it feels like the right direction:

fewer external dependencies,

fewer disconnected parts,

faster investigations,

more ownership of the operator experience,

and a platform that reflects the way we actually work.

Argus is still growing, but this transition from flowenricher to Argus has probably been one of the most meaningful steps in the project so far.