In which I spend a Sunday morning asking a local AI to tell me whether emails about cheap Viagra are, in fact, about cheap Viagra. Spoiler: the 0.5b model cannot.

The Problem

SpamAssassin is great. Bayes is great. RBLs are great. But spam has gotten weird. Greek-language product spam from hacked domains with randomized subfolders pointing at QP-encoded HTML bodies using windows-1251 charset? Yeah. The ruleset struggles.

What if a locally-running LLM — no cloud, no API key, no data leaving the server — could act as an additional scorer? Not a replacement for SA, just another voice in the room. One that actually reads the email and has an opinion.

That’s what this post is about. By the end of it you’ll have:

- Ollama running on your server, locked to localhost

- A SpamAssassin plugin (.pm + .cf) that calls it

- Proper MIME decoding (QP, base64, windows-1251, you name it)

- URL extraction and analysis baked into the prompt

- A skip-if-already-obvious optimization so you don’t burn CPU on every piece of obvious Viagra spam

Hardware used: AMD EPYC 4545P, 92GB RAM. But honestly, anything with 8GB free RAM works.

Step 1: Install Ollama

Ollama is a single Go binary that serves local LLM models via HTTP. It installs cleanly on AlmaLinux/CloudLinux/cPanel without touching any packages.

What it does:

- Drops /usr/local/bin/ollama

- Creates a systemd service

- Creates an ollama system user

- Stores models in /usr/share/ollama

What it does not do: touch cPanel, EA4, Apache, MySQL, or anything you care about.

# Check disk first - models are a few GB

df -h /usr

# Check the port is free

ss -tlnp | grep 11434

# Install

curl -fsSL https://ollama.com/install.sh | sh

# Pin to localhost BEFORE starting - Ollama already defaults to 127.0.0.1:11434, but an explicit value guards against an inherited OLLAMA_HOST

mkdir -p /etc/systemd/system/ollama.service.d

cat > /etc/systemd/system/ollama.service.d/override.conf <<'EOF'

[Service]

Environment="OLLAMA_HOST=127.0.0.1:11434"

Environment="OLLAMA_KEEP_ALIVE=5m"

EOF

systemctl daemon-reload

systemctl enable --now ollama

# Verify

curl -s http://127.0.0.1:11434/api/tags

OLLAMA_KEEP_ALIVE=5m is important. Without it, the model runner stays in memory and spins a CPU core at ~80-100% doing absolutely nothing useful. With it, the runner unloads after 5 minutes of inactivity and drops to 0%. It reloads on the next request in about 1-2 seconds.
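To confirm the service is only reachable from the local machine, you can scan the listener list. This is a minimal sketch, assuming the usual ss -tln column layout where the fourth field is the local address. The helper name ollama_loopback_only is made up for this post.

```shell
#!/usr/bin/env bash
# Hypothetical helper: reads `ss -tln` output on stdin and succeeds only if
# there is a listener on port 11434 and every such listener is loopback-bound.
ollama_loopback_only() {
  awk '
    $4 ~ /:11434$/ {
      found = 1
      if ($4 !~ /^(127\.0\.0\.1|\[::1\]):11434$/) bad = 1
    }
    END { exit ((found && !bad) ? 0 : 1) }
  '
}

# Usage on the server:
#   ss -tln | ollama_loopback_only && echo "localhost only" || echo "CHECK BINDING"
```

A wildcard bind such as 0.0.0.0:11434 or *:11434 makes the check fail, and so does a missing listener.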

To uninstall cleanly if you change your mind:

systemctl stop ollama && systemctl disable ollama

rm /usr/local/bin/ollama

rm /etc/systemd/system/ollama.service

rm -rf /usr/share/ollama

userdel ollama

Step 2: Pick a Model (We Tested Three)

We pulled three sizes of Qwen 2.5 and benchmarked them against synthetic ham, spam, and edge-case emails.

ollama pull qwen2.5:0.5b

ollama pull qwen2.5:3b

ollama pull qwen2.5:7b

Benchmark Script

#!/bin/bash

OLLAMA="http://127.0.0.1:11434/api/generate"

MODELS=("qwen2.5:0.5b" "qwen2.5:3b" "qwen2.5:7b")

declare -A EMAILS

EMAILS[HAM_1]="Hi John, just confirming our meeting tomorrow at 10am. Let me know if you need to reschedule. Best, Maria"

EMAILS[HAM_2]="Your invoice #4821 is attached. Payment due in 30 days. Thank you for your business."

EMAILS[HAM_3]="Server alert: disk usage on node3 reached 85%. Please review /var/log for large files."

EMAILS[SPAM_1]="CONGRATULATIONS! You have been selected to receive a FREE iPhone 15! Click here NOW to claim your prize before it expires!!!"

EMAILS[SPAM_2]="Dear valued customer, your account has been suspended. Verify your details immediately at http://secure-bank-login.xyz/verify"

EMAILS[SPAM_3]="Buy cheap Viagra, Cialis online no prescription needed. Discreet shipping worldwide. Best prices guaranteed!!!"

EMAILS[SPAM_4]="Make money from home! Earn 5000 USD per week working just 2 hours a day. No experience needed."

EMAILS[EDGE_1]="Your domain is expiring soon. Please renew at your registrar to avoid service interruption."

EMAILS[EDGE_2]="Special offer for existing customers: 20% discount on your next order. Use code SAVE20 at checkout."

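# $'...' quoting turns \n into real newlines; plain single quotes would send a literal backslash-n to the model

PROMPT=$'You are a spam filter. Rate this email as spam. Reply ONLY with a single decimal number between 0.0 (clean) and 1.0 (spam). No explanation.\n\nEmail:\n'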

for MODEL in "${MODELS[@]}"; do

echo "--- Model: $MODEL ---"

for KEY in HAM_1 HAM_2 HAM_3 SPAM_1 SPAM_2 SPAM_3 SPAM_4 EDGE_1 EDGE_2; do

BODY="${EMAILS[$KEY]}"

START=$(date +%s%3N)

RESPONSE=$(curl -s -X POST "$OLLAMA" \

-H "Content-Type: application/json" \

-d "$(jq -n --arg model "$MODEL" --arg prompt "${PROMPT}${BODY}" \

'{model: $model, prompt: $prompt, stream: false, options: {temperature: 0}}'

)" | jq -r '.response // "ERR"')

END=$(date +%s%3N)

ELAPSED=$(echo "scale=2; ($END - $START) / 1000" | bc)

SCORE=$(echo "$RESPONSE" | grep -oP '[01]?\.\d+' | head -1)

printf "%-12s %-8s %s\n" "$KEY" "${SCORE:-???}" "${ELAPSED}s"

done

echo ""

done

Results (Prompt v1 — naive)

| Test | 0.5b | 3b | 7b | Expected | Verdict |
|---|---|---|---|---|---|
| HAM_1 (meeting) | 0.9 | 0.9 | 0.2 | HAM | ✅ only 7b |
| HAM_2 (invoice) | 0.9 | 0.9 | 0.6 | HAM | ⚠️ |
| HAM_3 (server alert) | 0.9 | 0.9 | 0.6 | HAM | ⚠️ |
| SPAM_1 (prize) | 0.9 | 1.0 | 1.0 | SPAM | ✅ |
| SPAM_2 (phishing) | 0.9 | 0.9 | 0.9 | SPAM | ✅ |
| SPAM_3 (Viagra) | 0.9 | 1.0 | 0.9 | SPAM | ✅ |
| SPAM_4 (work from home) | 0.9 | 0.9 | 0.9 | SPAM | ✅ |
| EDGE_1 (domain expiry) | 0.9 | 0.8 | 0.7 | EDGE | ~ok |
| EDGE_2 (discount) | 0.9 | 0.8 | 0.6 | EDGE | ~ok |
| Avg latency | 0.18s | 0.58s | 1.16s | | |

Verdict:

- 0.5b: Outputs 0.9 for literally everything. Does not care. Philosophically committed to 0.9.

- 3b: Catches spam but also thinks invoices are spam. Useless for our purpose.

- 7b: Actually reasoning. HAM_2/HAM_3 too high, but the structure is there. Fixable with prompt engineering.

Results (Prompt v2 — with few-shot anchors)

The fix: give the model explicit calibration examples so it knows what 0.05 and 0.99 actually look like.
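For a sense of what "few-shot anchors" means in practice, here is a sketch of a prompt builder. The anchor emails and their scores below are illustrative assumptions, not the exact v2 prompt behind the results in this post, and build_fewshot_prompt is a made-up name.

```shell
#!/usr/bin/env bash
# Illustrative only: the anchor emails and scores are assumptions,
# not the exact v2 prompt used for the benchmark numbers.
build_fewshot_prompt() {
  local email="$1"
  cat <<EOF
You are a spam filter. Reply ONLY with a single decimal number between 0.0 (clean) and 1.0 (spam). No explanation.

Examples:
Email: Hi Anna, attaching the agenda for the Thursday call.
Score: 0.05
Email: WIN a FREE cruise!!! Click NOW to claim before midnight!!!
Score: 0.99
Email: Members get 15% off this weekend. Unsubscribe any time.
Score: 0.45

Email: ${email}
Score:
EOF
}
```

In the benchmark script this would replace the string concatenation, for example by passing --arg prompt "$(build_fewshot_prompt "$BODY")" to jq.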

| Test | Score | Time | Expected |
|---|---|---|---|
| HAM_1 (meeting) | 0.05 | 2.85s* | HAM ✅ |
| HAM_2 (invoice) | 0.10 | 0.86s | HAM ✅ |
| HAM_3 (server alert) | 0.05 | 0.87s | HAM ✅ |
| SPAM_1 (prize) | 0.98 | 0.93s | SPAM ✅ |
| SPAM_2 (phishing) | 0.92 | 0.84s | SPAM ✅ |
| SPAM_3 (Viagra) | 0.99 | 0.79s | SPAM ✅ |
| SPAM_4 (work from home) | 0.95 | 1.00s | SPAM ✅ |
| EDGE_1 (domain expiry) | 0.40 | 0.73s | EDGE ✅ |
| EDGE_2 (discount) | 0.45 | 0.83s | EDGE ✅ |
| Avg latency | n/a | 0.85s | |

* Cold load penalty on first request after runner start. Sustained latency is ~0.85s.

Perfect across the board. qwen2.5:7b with Prompt v2 is the winner.

Step 3: The SpamAssassin Plugin

Two files: a Perl plugin (.pm) and a config file (.cf). No extra CPAN modules required — we use JSON::PP (Perl core) and shell out to curl instead of LWP.

/etc/mail/spamassassin/llm_classifier.cf

loadplugin LLMClassifier /etc/mail/spamassassin/llm_classifier.pm

header LLM_SPAM eval:llm_is_spam()

describe LLM_SPAM LLM classifier: likely spam (score >= 0.75)

score LLM_SPAM 1.0

priority LLM_SPAM 1000

header LLM_UNKNOWN eval:llm_is_unknown()

describe LLM_UNKNOWN LLM classifier: uncertain (score 0.25-0.74)

score LLM_UNKNOWN 0.0

priority LLM_UNKNOWN 1000

header LLM_HAM eval:llm_is_ham()

describe LLM_HAM LLM classifier: likely clean (score < 0.25)

score LLM_HAM -1.0

priority LLM_HAM 1000

Priority 1000 ensures the LLM rules run after all Bayes, RBL, and header rules, so the skip-if-already-obvious logic has something to skip.

/etc/mail/spamassassin/llm_classifier.pm

package LLMClassifier;

use strict;

use Mail::SpamAssassin::Plugin;

use JSON::PP;

use Encode qw(decode);

use MIME::QuotedPrint qw(decode_qp);

our @ISA = qw(Mail::SpamAssassin::Plugin);

my $OLLAMA_URL = 'http://127.0.0.1:11434/api/generate';

my $MODEL = 'qwen2.5:7b';

my $TIMEOUT = 10;

my $PROMPT_PREFIX = <<'END';

You are an expert spam scoring engine. Output ONLY a single decimal number from 0.0 to 1.0.

0.0 = definitely legitimate email

0.5 = uncertain

1.0 = definitely spam

Scoring guidance:

STRONG SPAM signals:

- Links pointing to random subdomains or unrelated domains (e.g. nd.dikimux.help, secure-bank.xyz)

- Link domain completely unrelated to sender domain

- URL shorteners (bit.ly, tinyurl, t.co used to hide destination)

- Hacked sites used as redirectors (legitimate domain + random subfolder path like /tl-track6/)

- Sender domain does not match content context

- Excessive punctuation, ALL CAPS urgency

- Unrealistic offers (prizes, cheap drugs, work-from-home income)

- Phishing patterns (account suspended, verify now, click immediately)

- Product spam (hose, equipment, gadgets) sent to unrelated recipients

- Greek or foreign language spam advertising products with suspicious links

LEGITIMATE signals:

- Links match sender domain

- Professional business context (invoices, meetings, alerts)

- No deceptive links

- Consistent sender identity

EDGE cases (0.3-0.6):

- Marketing from known brands with unsubscribe links

- Domain expiry / hosting notices

- Discount offers from plausible senders

URLs found in email (analyze these carefully):

URLS_PLACEHOLDER

Email to score (subject + body):

END

sub new {

my ($class, $main) = @_;

my $self = $class->SUPER::new($main);

bless($self, $class);

$self->register_eval_rule('llm_is_spam');

$self->register_eval_rule('llm_is_unknown');

$self->register_eval_rule('llm_is_ham');

return $self;

}

# Decode a MIME part: handles QP/base64 encoding and charset conversion

sub _decode_part {

my ($part) = @_;

my $cte = lc($part->get_header('content-transfer-encoding') // '');

my $ct = $part->get_header('content-type') // '';

my $charset = 'UTF-8';

if ($ct =~ /charset=["']?([^"';\s]+)/i) { $charset = $1; }

my $raw = join('', @{$part->get_body() // []});

if ($cte =~ /quoted-printable/) {

$raw = decode_qp($raw);

} elsif ($cte =~ /base64/) {

require MIME::Base64;

$raw = MIME::Base64::decode_base64($raw);

}

my $decoded = eval { decode($charset, $raw) };

$decoded //= eval { decode('UTF-8', $raw) };

$decoded //= $raw;

# Strip HTML

$decoded =~ s/<[^>]+>//g;

$decoded =~ s/&[a-z]+;//gi;

$decoded =~ s/&#\d+;//g;

$decoded =~ s/\s+/ /g;

return $decoded;

}

sub _get_llm_score {

my ($self, $pms) = @_;

return $pms->{llm_score} if defined $pms->{llm_score};

# Skip if SA score already clearly decided - saves Ollama calls on obvious spam/ham

# Note: $pms->{score} contains the running total accumulated so far (priority 1000 = runs last)

my $current_score = $pms->{score} // 0;

if ($current_score >= 3.0) {

$pms->{llm_score} = -1;

Mail::SpamAssassin::Plugin::dbg("LLMClassifier: skipping, score already $current_score (>=3.0)");

return -1;

}

if ($current_score <= -2.0) {

$pms->{llm_score} = -1;

Mail::SpamAssassin::Plugin::dbg("LLMClassifier: skipping, score already $current_score (<=-2.0)");

return -1;

}

my $subject = $pms->get('Subject') // '(no subject)';

my $from = $pms->get('From') // '(unknown)';

# Extract and decode body - walk MIME parts with charset handling

my $body = '';

my $msg = $pms->get_message();

my @parts = $msg->find_parts(qr/^text\//i, 1);

if (!@parts) {

my $raw_ref = $msg->get_body();

$body = defined $raw_ref ? join('', @{$raw_ref}) : '';

} else {

for my $part (@parts) {

$body .= _decode_part($part) . "\n";

}

}

# Extract URLs from raw message before HTML stripping

my $raw_body = join('', @{$msg->get_body() // []});

my @urls = ($raw_body =~ m{https?://[^\s<"')\]]+}gi);

my %seen; @urls = grep { !$seen{$_}++ } @urls;

$body = substr($body, 0, 2000);

my $url_list = @urls ? join("\n", @urls) : "(none found)";

my $prompt = $PROMPT_PREFIX;

$prompt =~ s/URLS_PLACEHOLDER/$url_list/;

$prompt .= "From: $from\nSubject: $subject\n\n$body";

my $payload = encode_json({

model => $MODEL,

prompt => $prompt,

stream => JSON::PP::false,

options => { temperature => 0 },

});

# Write to tempfile to avoid shell quoting nightmares

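# File::Temp (Perl core) picks an unpredictable name; a fixed /tmp/llm_sa_$$.json is open to symlink tricks and PID reuse

require File::Temp;

my ($fh, $tmpfile) = eval { File::Temp::tempfile('llm_sa_XXXXXX', TMPDIR => 1, SUFFIX => '.json') };

if (!$fh) { $pms->{llm_score} = -1; return -1; }

print $fh $payload;

close($fh);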

my $result = eval {

local $SIG{ALRM} = sub { die "timeout\n" };

alarm($TIMEOUT);

my $out = `curl -s -m $TIMEOUT -X POST '$OLLAMA_URL' -H 'Content-Type: application/json' -d \@$tmpfile 2>/dev/null`;

alarm(0);

$out;

};

alarm(0);

unlink($tmpfile);

if ($@ || !defined $result || $result eq '') {

$pms->{llm_score} = -1;

return -1;

}

my $data = eval { decode_json($result) };

my $reply = $data->{response} // '';

my ($score) = ($reply =~ /([01](?:\.\d+)?|\.\d+)/);

$pms->{llm_score} = defined $score ? $score + 0 : -1;

$pms->set_tag('LLM_SCORE', sprintf("%.2f", $pms->{llm_score})) if $pms->{llm_score} >= 0;

return $pms->{llm_score};

}

sub llm_is_spam { my ($self,$pms)=@_; my $s=$self->_get_llm_score($pms); return ($s>=0 && $s>=0.75)?1:0 }

sub llm_is_unknown { my ($self,$pms)=@_; my $s=$self->_get_llm_score($pms); return ($s>=0.25 && $s<0.75)?1:0 }

sub llm_is_ham { my ($self,$pms)=@_; my $s=$self->_get_llm_score($pms); return ($s>=0 && $s<0.25)?1:0 }

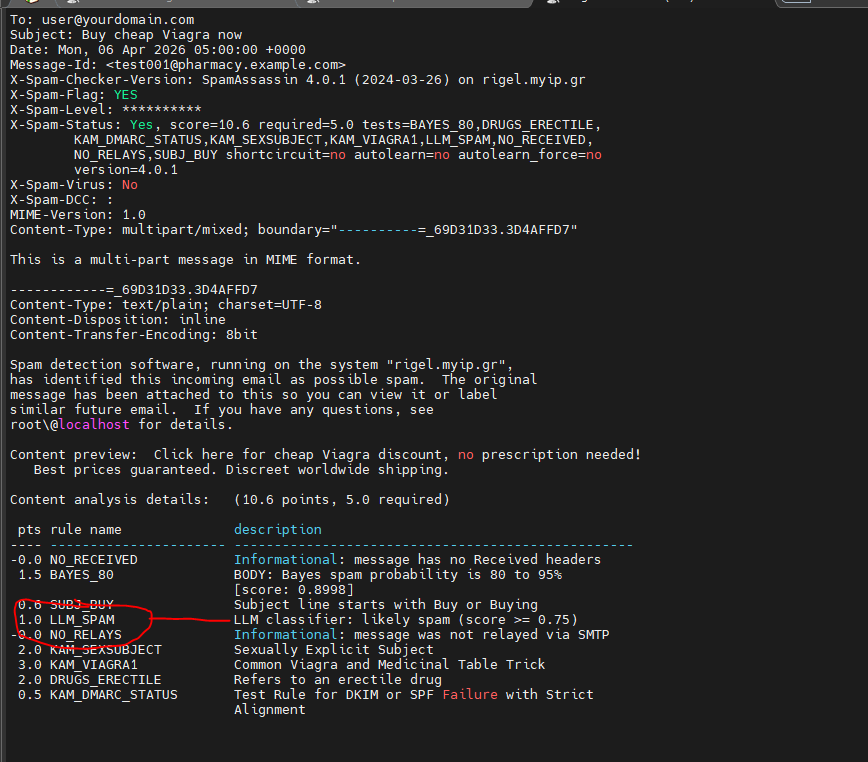

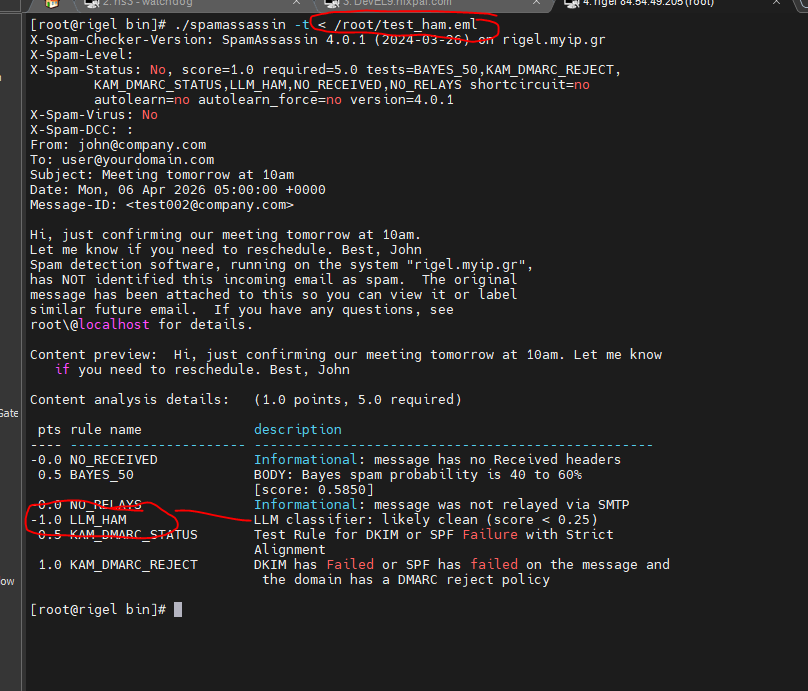

1;Step 4: Deploy and Test

# Lint check - always do this before restarting spamd

spamassassin --lint 2>&1

# Restart spamd (cPanel)

/scripts/restartsrv_spamd

# Test with synthetic emails

cat > /root/test_spam.eml << 'EOF'

From: deals@pharmacy.example.com

To: user@yourdomain.com

Subject: Buy cheap Viagra now

Date: Mon, 06 Apr 2026 05:00:00 +0000

Message-ID: <test001@pharmacy.example.com>

Click here for cheap Viagra discount, no prescription needed!

Best prices guaranteed. Discreet worldwide shipping.

EOF

cat > /root/test_ham.eml << 'EOF'

From: john@company.com

To: user@yourdomain.com

Subject: Meeting tomorrow at 10am

Date: Mon, 06 Apr 2026 05:00:00 +0000

Message-ID: <test002@company.com>

Hi, just confirming our meeting tomorrow at 10am.

Let me know if you need to reschedule. Best, John

EOF

spamassassin -t < /root/test_spam.eml | grep LLM

spamassassin -t < /root/test_ham.eml | grep LLM

Expected output:

# Spam email:

1.0 LLM_SPAM LLM classifier: likely spam (score >= 0.75)

# Ham email:

-1.0 LLM_HAM LLM classifier: likely clean (score < 0.25)

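The plugin also stores the raw float in the _LLM_SCORE_ template tag. To surface it as an X-Spam-LLM-Score header on messages the LLM actually scored (useful for tuning), add the line add_header all LLM-Score _LLM_SCORE_ to llm_classifier.cf; the plugin on its own does not emit the header.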
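For tuning, it helps to list the raw scores across a folder of delivered messages. This is a rough sketch, assuming the messages carry an X-Spam-LLM-Score header and are stored one per file (Maildir style). llm_scores is a hypothetical helper name.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print "<score> <file>" for each message that carries
# an X-Spam-LLM-Score header, lowest score first.
llm_scores() {
  local f score
  for f in "$@"; do
    # Only the header block is read: awk stops at the first blank line,
    # so body text cannot fake the header.
    score=$(awk '
      tolower($1) == "x-spam-llm-score:" { sub(/\r$/, "", $2); print $2; exit }
      /^\r?$/ { exit }
    ' "$f")
    [ -n "$score" ] && printf '%s %s\n' "$score" "$f"
  done | sort -n
}

# Usage (the path is an example):
#   llm_scores /home/user/mail/example.com/user/cur/*
```

Messages sitting just above 0.25 or just below 0.75 are the ones worth reading by hand before changing the thresholds.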

How It Works in Practice

The scoring flow for each message:

- SA runs all its normal rules: Bayes, RBLs, header checks, DKIM, URI lists, etc.

- At priority 1000 (last), our LLM rules fire.

- If the accumulated SA score is already >= 3.0 — it’s obviously spam, skip Ollama entirely.

- If score <= -2.0 — clearly trusted, skip.

- Otherwise (the uncertain zone) — call Ollama, get a float back, add it to SA’s score.
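The gate in the steps above boils down to one comparison. Here it is as a small shell sketch using the same 3.0 and -2.0 thresholds. The function name should_call_llm is ours for illustration, and awk does the floating-point comparison because bash only has integer arithmetic.

```shell
#!/usr/bin/env bash
# Sketch of the skip-if-already-obvious gate.
# Returns success (0) when the accumulated SA score is in the uncertain zone.
should_call_llm() {
  awk -v s="$1" 'BEGIN { exit ((s >= 3.0 || s <= -2.0) ? 1 : 0) }'
}

for s in 7.2 3.0 1.4 -0.5 -2.0; do
  if should_call_llm "$s"; then
    echo "$s -> call Ollama"
  else
    echo "$s -> skip"
  fi
done
```

Only 1.4 and -0.5 reach the model here; the other three are decided by the regular rules.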

The LLM contributes:

- +1.0 if it thinks spam (score ≥ 0.75)

- 0.0 if uncertain (0.25–0.74)

- -1.0 if it thinks ham (score < 0.25)
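The same mapping can be written as a lookup, which is handy for sanity-checking thresholds before editing the plugin. This sketch mirrors the three eval rules and treats a negative value as "no opinion", matching the plugin's -1 sentinel for skipped or failed calls. llm_rule_for is a hypothetical name.

```shell
#!/usr/bin/env bash
# Sketch: map a raw LLM float to the rule that would fire and its score.
llm_rule_for() {
  awk -v s="$1" 'BEGIN {
    if (s < 0)          print "none"             # -1 = skipped or failed call
    else if (s >= 0.75) print "LLM_SPAM +1.0"
    else if (s >= 0.25) print "LLM_UNKNOWN 0.0"
    else                print "LLM_HAM -1.0"
  }'
}

# Usage:
#   llm_rule_for 0.92
#   llm_rule_for 0.40
```

Both boundary values go to the higher bucket: exactly 0.75 counts as spam and exactly 0.25 counts as uncertain.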

At these weights it’s a tiebreaker, not a dictator. Once you’ve run it for a week and trust it, bump LLM_SPAM to 2.5 and LLM_HAM to -2.0.

Lessons Learned

Model size matters enormously for reasoning tasks. The 0.5b model is a parrot. The 7b model actually reads the email. The 3b model is not a usable middle ground: it caught the spam samples but also scored all three ham samples at 0.9, which rules it out for this use case.

Prompt engineering is 80% of the work. The difference between “0.9 for everything” and “0.05/0.99 perfect discrimination” was entirely in how we wrote the prompt. Few-shot examples with explicit numeric anchors are mandatory.

get_score() in SpamAssassin does not return what you think during eval. Use $pms->{score} directly. The SA internals accumulate into the hashref; the method may return stale or zero values depending on evaluation phase.

MIME encoding is a mess and always has been. Greek spam arrives as QP-encoded windows-1251. Without proper decoding the LLM sees =CE=97 =CF=80=CE=BB=CE=AE=CF=81=CE=B7=CF=82 and has no idea what to do with it. Always decode the part, decode the charset, strip HTML entities, then feed to the model.
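The plugin does this in Perl, but when inspecting a suspicious sample by hand it is useful to have the same two steps on the command line. This is a sketch, assuming bash and iconv are available. decode_qp_part is a made-up helper and only covers quoted-printable, not base64.

```shell
#!/usr/bin/env bash
# Sketch: decode a quoted-printable body from stdin, then convert it from
# the declared charset (first argument, default UTF-8) to UTF-8.
decode_qp_part() {
  local charset="${1:-UTF-8}" line out=""
  while IFS= read -r line || [ -n "$line" ]; do
    line="${line%$'\r'}"                  # tolerate CRLF line endings
    if [[ "$line" == *= ]]; then
      out+="${line%=}"                    # trailing "=" is a soft line break
    else
      out+="$line"$'\n'
    fi
  done
  out="${out//\\/\\\\}"                   # keep literal backslashes intact for %b
  out="$(sed 's/=\([0-9A-Fa-f][0-9A-Fa-f]\)/\\x\1/g' <<<"$out")"
  printf '%b\n' "$out" | iconv -f "$charset" -t UTF-8
}

# The byte run quoted above is UTF-8 once the QP layer is removed:
printf '=CE=97 =CF=80=CE=BB=CE=AE=CF=81=CE=B7=CF=82\n' | decode_qp_part UTF-8
# A windows-1251 body needs the charset conversion as well:
printf '=CF=F0=E8=E2=E5=F2\n' | decode_qp_part CP1251
```

The first call prints the Greek text "Η πλήρης" and the second prints the Russian word "Привет". Without the charset step the second one would reach the model as six meaningless bytes.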

Ollama’s idle CPU spin is real but manageable. Set OLLAMA_KEEP_ALIVE=5m and accept the 1-2s cold start on the first email after idle. For a mail server with bursty traffic patterns, this is the right tradeoff.

The LLM and Bayes agree more than they disagree. When they do agree, confidence in the result is high. When they disagree, that’s a signal worth investigating — it usually means the email is genuinely ambiguous or a novel attack pattern.

Tuning Reference

| Parameter | Location | Default | Notes |
|---|---|---|---|
| Skip threshold (spam) | .pm line ~85 | 3.0 | Lower = fewer Ollama calls |
| Skip threshold (ham) | .pm line ~91 | -2.0 | More negative = fewer skips on trusted mail |
| LLM_SPAM score | .cf | 1.0 | Raise to 2.5 after burn-in |
| LLM_HAM score | .cf | -1.0 | Lower to -2.0 after burn-in |
| Spam threshold | .pm line ~125 | 0.75 | LLM score above this = spam hit |
| Ham threshold | .pm line ~127 | 0.25 | LLM score below this = ham hit |
| Body truncation | .pm line ~110 | 2000 chars | Increase for longer emails |
| Timeout | .pm line ~14 | 10s | SA blocks during this wait |
| Keep-alive | systemd override | 5m | How long runner stays in RAM |
| Model | .pm line ~13 | qwen2.5:7b | Bigger = better, slower |

Running on Rigel — AMD EPYC 4545P / AlmaLinux 9 / cPanel. The model thinks invoice emails are clean and Viagra ads are not. We’ve achieved consensus with the AI on at least one thing.